GARCH Model of Volatility

Level 2 - Intermediate Vol Trader

Why Volatility Clusters: A Walk Through GARCH

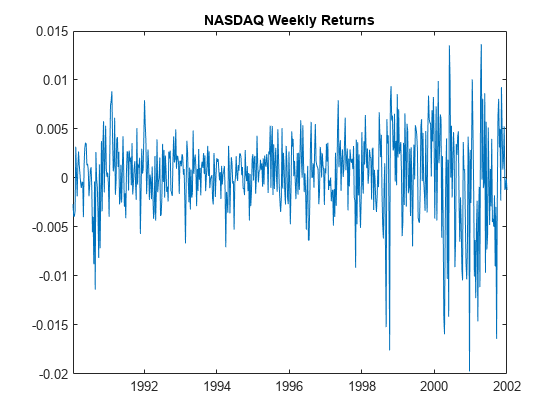

Pull up a long-dated chart of NASDAQ returns — say, the last thirty years — and squint at it. Don’t look at the price. Look at the jitter. Look at how violently the daily moves expand and contract over time.

What you’ll notice is that the chart doesn’t behave like a steady drumbeat. It looks more like weather. There are long stretches where the market seems to barely breathe — narrow daily moves, gentle drift, the kind of placid tape that lulls portfolio managers into thinking risk has been solved. And then, almost without warning, the lid comes off. The same index that was meandering by 0.3% a day starts swinging 3%, 4%, 5%. The storm settles in for months, sometimes years, before the calm returns.

Two things should jump out:

First, volatility is not constant. It changes with time. In statistical language, it is stochastic — itself a random variable, not a fixed parameter you can look up in a textbook. The simple assumption every introductory finance course leans on — that returns are drawn from a normal distribution with a stable standard deviation — is a useful fiction. In the real world, that standard deviation is alive and moving.

Second, and more interestingly, today’s volatility looks an awful lot like yesterday’s. Quiet begets quiet. The mid-1990s (roughly 1992 through 1998) were a long, gentle hum. Storms beget storms. The dot-com boom and bust from 1998 through 2002 was a sustained period of elevated turbulence where one wild day was almost always followed by another. In 2008 it happened again. In March 2020 it happened again.

This is the property that statisticians call autocorrelation in volatility: the correlation of a variable with a lagged copy of itself. Less formally, it’s the reason traders talk about volatility clustering. Volatility doesn’t sprinkle itself uniformly across the calendar like raisins in a scone. It bunches up.
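One way to see clustering in numbers rather than by squinting is to compare the lag-1 autocorrelation of returns with that of their absolute values. The sketch below is only an illustration on made-up data: the regime lengths (250 days), the calm and stormy volatilities (0.3% and 3%, borrowed from the figures above), and the helper names are all assumptions, not anything estimated from NASDAQ.

```python
import random
import statistics


def autocorr(xs, lag=1):
    """Sample autocorrelation of xs at the given lag."""
    mean = statistics.fmean(xs)
    dev = [x - mean for x in xs]
    num = sum(dev[i] * dev[i - lag] for i in range(lag, len(dev)))
    den = sum(d * d for d in dev)
    return num / den


def simulate_regime_returns(n_days=5000, seed=0):
    """Toy daily returns whose volatility alternates between calm and
    stormy blocks of 250 days (hypothetical regimes, not real data)."""
    rng = random.Random(seed)
    returns = []
    for day in range(n_days):
        stormy = (day // 250) % 2 == 1
        vol = 0.03 if stormy else 0.003
        returns.append(rng.gauss(0.0, vol))
    return returns


returns = simulate_regime_returns()
acf_returns = autocorr(returns)
acf_abs = autocorr([abs(r) for r in returns])

print(f"lag-1 autocorrelation of returns:   {acf_returns:+.3f}")
print(f"lag-1 autocorrelation of |returns|: {acf_abs:+.3f}")
```

In this toy series the direction of tomorrow's move is close to unpredictable (autocorrelation of returns near zero), while the size of the move is clearly related to the size of today's move (autocorrelation of absolute returns well above zero). That is the signature of clustering.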

The puzzle this creates

Once you accept that volatility clusters, you have a problem. The classical toolbox of finance — mean, variance, the bell curve — assumes the world is well-behaved in a particular way. It assumes that the size of tomorrow’s surprise is drawn from the same distribution as the size of last Tuesday’s surprise, and last June’s, and the surprise from three years ago. Statisticians call this assumption homoskedasticity: equal scatter, equal variance, across time.

But the NASDAQ chart is screaming the opposite. The scatter is unequal across time. There are calm regimes and turbulent regimes, and they persist. The data is heteroskedastic — different scatter in different periods — and crucially, the heteroskedasticity is predictable, because volatility today carries information about volatility tomorrow.

For decades, this was treated as an annoying empirical wart on the elegant theoretical models. Then, in 1982, Robert Engle proposed a way to actually model it.

ARCH: yesterday’s surprise predicts today’s variance

Engle’s idea, which would eventually win him the 2003 Nobel Prize in Economics, was disarmingly simple. What if today’s variance isn’t a fixed number, but a function of recent shocks?

He called it ARCH — Autoregressive Conditional Heteroskedasticity. The name is a mouthful, but the components are doing intuitive work:

Autoregressive: today depends on yesterday.

Conditional: variance is conditional on what just happened, not assumed in advance.

Heteroskedasticity: variance changes over time.

Strip the jargon and ARCH says this: the bigger yesterday’s unexpected return was, the bigger we should expect today’s variance to be.

That’s it. If the market got slapped with a 4% surprise yesterday, ARCH says the distribution from which today’s return is drawn has a larger standard deviation than it would have had after a sleepy 0.2% day. Because the model works with the squared surprise, the direction of the move doesn’t matter, only its size. The model is essentially a feedback loop where shocks beget more potential for shocks.
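To make the feedback loop concrete, here is a minimal sketch of the simplest version, ARCH(1), where today's conditional variance is a baseline plus a weight times yesterday's squared surprise. The parameter values `OMEGA` and `ALPHA` are hypothetical numbers chosen for illustration, not estimates from any market.

```python
import math


def arch1_variance(prev_shock, omega, alpha):
    """ARCH(1): today's conditional variance given yesterday's surprise."""
    return omega + alpha * prev_shock ** 2


# Hypothetical parameters in decimal daily-return units (not fitted to data).
OMEGA = 0.00005  # baseline variance
ALPHA = 0.40     # weight on yesterday's squared surprise

for shock in (0.002, 0.04):
    vol = math.sqrt(arch1_variance(shock, OMEGA, ALPHA))
    print(f"yesterday's surprise {shock:.1%} -> today's conditional vol {vol:.2%}")
```

With these assumed parameters, the sleepy 0.2% day leaves today's conditional volatility near the baseline (roughly 0.7%), while the 4% surprise lifts it to roughly 2.6%.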

It worked. Empirically, ARCH captured the clustering in equity returns, exchange rates, commodities — markets across the board.

GARCH: variance has memory of itself

Four years later, in 1986, Tim Bollerslev proposed a generalization. Engle’s ARCH said today’s variance depends on yesterday’s surprise. Bollerslev said: that’s true, but it’s also true that today’s variance depends on yesterday’s variance itself.

This is the GARCH model — Generalized ARCH. It adds one more ingredient: variance has its own memory, separate from the memory of individual shocks. Today’s variance is a weighted blend of three things:

A long-run baseline level of variance (the regime average that variance eventually reverts to).

Yesterday’s surprise (the ARCH part — sharp shocks lift expected variance).

Yesterday’s variance itself (the new GARCH part — if vol was already elevated, it stays sticky).
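For readers who want the blend written out, the standard GARCH(1,1) form is below. The symbols are conventional notation rather than anything defined in this article: \sigma_t^2 is today's conditional variance, \varepsilon_{t-1} is yesterday's surprise, \bar{\sigma}^2 is the long-run variance, and \omega, \alpha, \beta are non-negative parameters with \alpha + \beta < 1.

```latex
\sigma_t^2 = \omega + \alpha\,\varepsilon_{t-1}^2 + \beta\,\sigma_{t-1}^2,
\qquad
\bar{\sigma}^2 = \frac{\omega}{1 - \alpha - \beta}

% Substituting \omega = (1 - \alpha - \beta)\,\bar{\sigma}^2 shows the three-way blend:
\sigma_t^2 = (1 - \alpha - \beta)\,\bar{\sigma}^2
           + \alpha\,\varepsilon_{t-1}^2
           + \beta\,\sigma_{t-1}^2
```

The three weights sum to one, which is what makes "weighted blend" a fair description. Setting \beta = 0 recovers ARCH(1).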

That third ingredient is what makes GARCH so good at capturing real markets. It explains persistence. A single 4% day doesn’t just elevate today’s expected vol and then snap back to normal tomorrow; it elevates a state variable — variance itself — that decays slowly. Storms have inertia. So do calms.

The math is more involved than ARCH, but the intuition is what matters: variance is sticky, and GARCH is the simplest equation that takes that stickiness seriously.
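The stickiness can be sketched in a few lines. The example below applies one GARCH(1,1) update after a single 4% surprise and then tracks how the expected variance decays back toward its long-run level. The parameters (`OMEGA`, `ALPHA`, `BETA`) and function names are hypothetical choices for illustration, picked so that the long-run daily volatility is about 1%.

```python
import math


def garch11_step(prev_var, prev_shock, omega, alpha, beta):
    """GARCH(1,1): next variance from the baseline term, yesterday's
    squared surprise, and yesterday's variance."""
    return omega + alpha * prev_shock ** 2 + beta * prev_var


def expected_variance_path(start_var, shock, omega, alpha, beta, horizon):
    """Expected conditional variance for `horizon` days after one shock.

    After the first update, the expected squared surprise equals the
    variance itself, so the gap to the long-run level shrinks by a
    factor of (alpha + beta) each day.
    """
    long_run = omega / (1.0 - alpha - beta)
    var = garch11_step(start_var, shock, omega, alpha, beta)
    path = [var]
    for _ in range(horizon - 1):
        var = long_run + (alpha + beta) * (var - long_run)
        path.append(var)
    return path


# Hypothetical parameters (not fitted to data).
OMEGA, ALPHA, BETA = 0.000002, 0.08, 0.90
long_run_var = OMEGA / (1.0 - ALPHA - BETA)

# Start at the long-run level, then hit the market with a 4% surprise.
path = expected_variance_path(long_run_var, 0.04, OMEGA, ALPHA, BETA, horizon=60)

print(f"long-run vol: {math.sqrt(long_run_var):.2%}")
for day in (1, 5, 20, 60):
    print(f"day {day:2d} after the shock: expected vol {math.sqrt(path[day - 1]):.2%}")
```

Under these assumptions, expected volatility is still above its long-run level sixty trading days after the shock. With `BETA` set to zero the same shock would fade almost immediately, which is the difference between ARCH and GARCH in practice.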

What traders actually do with this

Here is the part that I think is underappreciated by people learning this material from textbooks. Most discretionary options traders have never written down a GARCH equation in their lives. And yet they use it constantly.

Nassim Taleb makes this point in Dynamic Hedging — that experienced options traders are, in his phrase, “calculating GARCH in their head.” They look at the time series of an asset’s prices and they can see the regimes. They feel where vol is clustered high and where it’s clustered low. They notice when a sleepy market just delivered a fat-tailed day and adjust their pricing of optionality upward, not because a model told them to, but because their pattern recognition is doing the same job the model is doing — weighting recent shocks, weighting the prevailing regime, expecting persistence.

This is, I think, the right way to hold the relationship between the model and the practice. GARCH didn’t invent volatility clustering. Bollerslev didn’t teach the market that storms persist. The model is a mathematical formalization of something traders have known in their bones for as long as there have been markets — that risk has a memory, and the recent past is the best guide to the near future.

What Engle and Bollerslev did was give us a way to write that intuition down, estimate it from data, and bolt it onto the rest of finance — option pricing, risk management, capital allocation — so that those frameworks could finally start treating volatility the way the tape has always behaved: alive, moving, and clustered.